ETL for Heterogeneous Data Sources: DataX Tool Deployment (02)

Continued from the previous post: (Enterprise internal) ETL for Heterogeneous Data Sources: DataX Deployment Prerequisites (01)

https://gblfy.blog.csdn.net/article/details/118081253

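Contents

- 1. Download DataX directly

- 2. Download the DataX source and build it yourself

- 2.1. Download the DataX source

- 2.2. Package with Maven

- 3. Configuration example

- 3.1. Create the job configuration file (JSON format)

- 3.2. Configure the JSON from the template

- 3.3. Run the test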

1. Download DataX directly

DataX download link

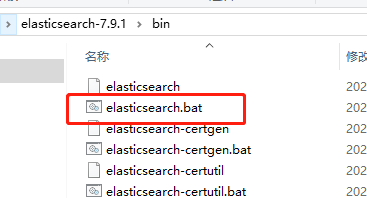

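After downloading, extract the archive to a local directory and change into its bin directory. From there you can run a sync job:

    tar zxvf datax.tar.gz
    cd {YOUR_DATAX_HOME}/bin
    python datax.py {YOUR_JOB.json}

Self-check script:

    python {YOUR_DATAX_HOME}/bin/datax.py {YOUR_DATAX_HOME}/job/job.json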
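If the job is launched from another script rather than typed by hand, the same command line can be assembled programmatically. The following is a minimal sketch, not part of DataX itself: the helper names `build_datax_command`, `self_check_command` and `run_job` are hypothetical, and `/opt/datax` in the usage note is a placeholder install path. The interpreter defaults to `python`, as in the commands above.

```python
import subprocess
from pathlib import Path


def build_datax_command(datax_home, job_file, python_exe="python"):
    """Return the argv list equivalent to: python {home}/bin/datax.py {job}."""
    launcher = Path(datax_home) / "bin" / "datax.py"
    return [python_exe, str(launcher), str(job_file)]


def self_check_command(datax_home, python_exe="python"):
    """Return the argv list for the bundled self-check job (job/job.json)."""
    home = Path(datax_home)
    return build_datax_command(home, home / "job" / "job.json", python_exe)


def run_job(datax_home, job_file, python_exe="python"):
    """Run one job and raise CalledProcessError on a non-zero exit code."""
    command = build_datax_command(datax_home, job_file, python_exe)
    return subprocess.run(command, check=True)
```

For example, `self_check_command("/opt/datax")` yields the three arguments of the self-check command shown above, with the placeholder path substituted for `{YOUR_DATAX_HOME}`.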

2. Download the DataX source and build it yourself

DataX source code

2.1. Download the DataX source

    git clone git@github.com:alibaba/DataX.git

2.2. Package with Maven

    cd {DataX_source_code_home}
    mvn -U clean package assembly:assembly -Dmaven.test.skip=true

When packaging succeeds, the log ends as follows:

    [INFO] BUILD SUCCESS
    [INFO] -----------------------------------------------------------------
    [INFO] Total time: 08:12 min
    [INFO] Finished at: 2021-12-13T16:26:48+08:00
    [INFO] Final Memory: 133M/960M
    [INFO] -----------------------------------------------------------------

After a successful build, the DataX package is located in {DataX_source_code_home}/target/datax/datax/, with the following structure:

    bin conf job lib log log_perf plugin script tmp
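A quick way to confirm that a build produced this layout is to compare the output directory against the list above. This is only a sketch: the helper name `missing_entries` is hypothetical, and the expected names are copied from the listing in this article, so they may differ for other DataX versions.

```python
from pathlib import Path

# Entry names taken from the directory listing shown in this article.
EXPECTED_ENTRIES = (
    "bin", "conf", "job", "lib", "log", "log_perf", "plugin", "script", "tmp",
)


def missing_entries(datax_dir, expected=EXPECTED_ENTRIES):
    """Return the expected entries that do not exist under datax_dir."""
    root = Path(datax_dir)
    return [name for name in expected if not (root / name).exists()]
```

An empty list means every entry from the listing is present under the directory you pass in.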

3. Configuration example

Read data from a stream and print it to the console.

3.1. Create the job configuration file (JSON format)

You can print a configuration template with the command python datax.py -r {YOUR_READER} -w {YOUR_WRITER}:

    cd {YOUR_DATAX_HOME}/bin
    python datax.py -r streamreader -w streamwriter

    # View configuration templates for common jobs
    python datax.py -r streamreader -w streamwriter
    python datax.py -r oraclereader -w mysqlwriter
    python datax.py -r mysqlreader -w oraclewriter

Console output:

```bash
DataX (DATAX-OPENSOURCE-3.0), From Alibaba !
Copyright (C) 2010-2017, Alibaba Group. All Rights Reserved.

Please refer to the streamreader document:
    https://github.com/alibaba/DataX/blob/master/streamreader/doc/streamreader.md

Please refer to the streamwriter document:
    https://github.com/alibaba/DataX/blob/master/streamwriter/doc/streamwriter.md

Please save the following configuration as a json file and use
    python {DATAX_HOME}/bin/datax.py {JSON_FILE_NAME}.json
to run the job.

{
    "job": {
        "content": [
            {
                "reader": {
                    "name": "streamreader",
                    "parameter": {
                        "column": [],
                        "sliceRecordCount": ""
                    }
                },
                "writer": {
                    "name": "streamwriter",
                    "parameter": {
                        "encoding": "",
                        "print": true
                    }
                }
            }
        ],
        "setting": {
            "speed": {
                "channel": ""
            }
        }
    }
}
```
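The template leaves several values blank (empty strings and an empty list) that have to be filled in before the job is usable. As a rough aid, the sketch below walks a parsed template and lists those blanks. The function name `unfilled_fields` is hypothetical, and treating "empty string or empty list" as "unfilled" is an assumption based on the stream template above, not a DataX rule.

```python
import json

# The streamreader/streamwriter template printed by datax.py, as shown above.
TEMPLATE = """
{"job": {"content": [{"reader": {"name": "streamreader",
                                  "parameter": {"column": [], "sliceRecordCount": ""}},
                      "writer": {"name": "streamwriter",
                                  "parameter": {"encoding": "", "print": true}}}],
         "setting": {"speed": {"channel": ""}}}}
"""


def unfilled_fields(node, path=""):
    """Yield the path of every value that is an empty string or empty list."""
    if isinstance(node, dict):
        for key, value in node.items():
            yield from unfilled_fields(value, f"{path}.{key}" if path else key)
    elif isinstance(node, list):
        if not node:
            yield path
        for index, item in enumerate(node):
            yield from unfilled_fields(item, f"{path}[{index}]")
    elif node == "":
        yield path


blank_paths = list(unfilled_fields(json.loads(TEMPLATE)))
```

For this template, `blank_paths` holds the reader's `column` and `sliceRecordCount`, the writer's `encoding`, and the `channel` setting, which are exactly the values the next step fills in.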

3.2. Configure the JSON from the template

Create the job file with vim stream2stream.json and add the following content:

    {
        "job": {
            "content": [
                {
                    "reader": {
                        "name": "streamreader",
                        "parameter": {
                            "sliceRecordCount": 10,
                            "column": [
                                {
                                    "type": "long",
                                    "value": "10"
                                },
                                {
                                    "type": "string",
                                    "value": "hello,你好,世界-DataX"
                                }
                            ]
                        }
                    },
                    "writer": {
                        "name": "streamwriter",
                        "parameter": {
                            "encoding": "UTF-8",
                            "print": true
                        }
                    }
                }
            ],
            "setting": {
                "speed": {
                    "channel": 5
                }
            }
        }
    }
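Instead of editing the file by hand, the same job can be generated from a script, which avoids JSON syntax slips and keeps the non-ASCII sample string intact. This is a minimal sketch: `build_stream_job` and `write_job` are hypothetical helper names, and the field values simply mirror the stream2stream.json content above.

```python
import json


def build_stream_job(slice_record_count=10, channel=5):
    """Build the streamreader -> streamwriter job shown in this section."""
    reader = {
        "name": "streamreader",
        "parameter": {
            "sliceRecordCount": slice_record_count,
            "column": [
                {"type": "long", "value": "10"},
                {"type": "string", "value": "hello,你好,世界-DataX"},
            ],
        },
    }
    writer = {
        "name": "streamwriter",
        "parameter": {"encoding": "UTF-8", "print": True},
    }
    return {
        "job": {
            "content": [{"reader": reader, "writer": writer}],
            "setting": {"speed": {"channel": channel}},
        }
    }


def write_job(path, job):
    """Write the job as UTF-8 JSON, keeping non-ASCII text readable."""
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(job, handle, ensure_ascii=False, indent=4)
```

Calling `write_job("stream2stream.json", build_stream_job())` produces a file equivalent to the one above.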

3.3. Run the test

Start DataX:

    cd {YOUR_DATAX_DIR_BIN}
    python datax.py ./stream2stream.json

When the sync finishes, the log ends as follows:

    ...
    2021-06-23 09:43:14.869 [job-0] INFO StandAloneJobContainerCommunicator - Total 50 records, 950 bytes | Speed 95B/s, 5 records/s | Error 0 records, 0 bytes | All Task WaitWriterTime 0.000s | All Task WaitReaderTime 0.000s | Percentage 100.00%
    2021-06-23 09:43:14.872 [job-0] INFO JobContainer -
    任务启动时刻 : 2021-06-23 09:43:04
    任务结束时刻 : 2021-06-23 09:43:14
    任务总计耗时 : 10s
    任务平均流量 : 95B/s
    记录写入速度 : 5rec/s
    读出记录总数 : 50
    读写失败总数 : 0

The summary fields are, in order: job start time, job end time, total elapsed time, average throughput, record write speed, total records read, and total read/write failures.
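The numbers in this log can be cross-checked against the job configuration. The sketch below parses the summary line with a regular expression and verifies the arithmetic: 950 bytes over 10 s gives 95 B/s, 50 records over 10 s gives 5 records/s, and 50 records equals 5 channels times a sliceRecordCount of 10. The last relation is an observation about this particular run; that streamreader always emits sliceRecordCount records per channel is an assumption here. The pattern and the name `parse_summary` are hypothetical and only tested against the line shown above.

```python
import re

SUMMARY_RE = re.compile(
    r"Total (?P<records>\d+) records, (?P<bytes>\d+) bytes"
    r".*?Error (?P<error_records>\d+) records, (?P<error_bytes>\d+) bytes"
)

# Summary line copied from the log in this section.
LOG_LINE = (
    "2021-06-23 09:43:14.869 [job-0] INFO StandAloneJobContainerCommunicator - "
    "Total 50 records, 950 bytes | Speed 95B/s, 5 records/s | "
    "Error 0 records, 0 bytes | All Task WaitWriterTime 0.000s | "
    "All Task WaitReaderTime 0.000s | Percentage 100.00%"
)


def parse_summary(line):
    """Return the record and byte counters as ints, or None if no match."""
    match = SUMMARY_RE.search(line)
    if match is None:
        return None
    return {name: int(value) for name, value in match.groupdict().items()}


summary = parse_summary(LOG_LINE)
elapsed_seconds = 10             # "任务总计耗时 : 10s" in the log
channels, slice_count = 5, 10    # values from stream2stream.json

bytes_per_second = summary["bytes"] / elapsed_seconds
records_per_second = summary["records"] / elapsed_seconds
expected_records = channels * slice_count
```

A mismatch between `expected_records` and `summary["records"]`, or a non-zero error counter, would be the first thing to look at if a run does not behave as expected.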
