Hadoop in Practice (3): Hadoop Cluster Configuration

Step 1: Cluster planning

master 192.168.136.140

slave1 192.168.136.151

slave2 192.168.136.152

Step 2: Configure environment variables

1) vi /etc/profile and append the following lines at the end:

export JAVA_HOME=/usr/java/jdk1.7.0_80

export JAVA_BIN=/usr/java/jdk1.7.0_80/bin

export PATH=$PATH:$JAVA_HOME/bin

export CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

export JAVA_HOME JAVA_BIN PATH CLASSPATH

export HADOOP_HOME=/opt/hadoop/hadoop-2.6.4

export PATH=$PATH:$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

Apply the changes: [root@master dfs]# source /etc/profile

2) Edit /etc/hosts and append the following entries at the end:

[root@master dfs]# vim /etc/hosts

127.0.0.1 localhost

192.168.136.140 master.hadoop

192.168.136.151 slave1.hadoop

192.168.136.152 slave2.hadoop
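Before moving on, it can help to confirm that all three hostnames really are in the hosts file. The following is a minimal sketch and not part of the original setup: the helper name check_hosts_file is hypothetical, and it only checks that each hostname appears on a non-comment line of the given file.

```shell
#!/bin/sh
# check_hosts_file is a hypothetical helper, not a Hadoop tool.
# $1: path to a hosts file; remaining arguments: hostnames that must be listed.
check_hosts_file() {
    file="$1"
    shift
    status=0
    for host in "$@"; do
        # Skip comment lines, then look for the hostname in any column after the IP.
        if ! awk -v h="$host" '
            $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == h) found = 1 }
            END { exit !found }' "$file"; then
            echo "missing from $file: $host"
            status=1
        fi
    done
    return "$status"
}

# Check the three cluster hostnames used in this post.
check_hosts_file /etc/hosts master.hadoop slave1.hadoop slave2.hadoop \
    || echo "fix /etc/hosts before continuing"
```

The same call can be repeated on slave1 and slave2 once their hosts files have been edited.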

Step 3: Configure Hadoop (on master, 192.168.136.140)

As the hadoop user, change to the directory /opt/hadoop/hadoop-2.6.4/etc/hadoop

1) [hadoop@master hadoop]$ vim core-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
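Only the XML header of core-site.xml is shown here, not its property section. As a rough sketch only, and assuming the NameNode address master.hadoop:9000 that appears in the logs further down, a minimal file could look like the one the script below writes. It writes into a scratch directory so that no live configuration is touched; the variable name sketch_dir is hypothetical, and the real file may well have contained more properties.

```shell
#!/bin/sh
# Write an illustrative core-site.xml into a scratch directory (assumption: not the author's actual file).
sketch_dir="$(mktemp -d)"

cat > "$sketch_dir/core-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <!-- Assumed value: host and port taken from the master.hadoop:9000 seen in the logs -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://master.hadoop:9000</value>
  </property>
</configuration>
EOF

echo "wrote $sketch_dir/core-site.xml"
```

After review, the file would be copied into /opt/hadoop/hadoop-2.6.4/etc/hadoop by hand.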

2) vim hadoop-env.sh and yarn-env.sh, and add the following environment variable at the top of each file (this is required, do not leave it out)

export JAVA_HOME=/usr/java/jdk1.7.0_80

3) vim hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
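The property section of hdfs-site.xml is likewise not shown. The sketch below is an assumption-laden illustration: the NameNode directory /usr/hadoop/dfs/name comes from the error log in the supplement later in this post, while the DataNode directory and the replication factor of 2 (one copy per slave) are guesses made for the example. As before, it writes to a scratch directory and sketch_dir is a hypothetical name.

```shell
#!/bin/sh
# Write an illustrative hdfs-site.xml into a scratch directory (assumption: not the author's actual file).
sketch_dir="$(mktemp -d)"

cat > "$sketch_dir/hdfs-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <!-- Taken from the NameNode error log in this post -->
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>/usr/hadoop/dfs/name</value>
  </property>
  <!-- Assumed by analogy with the name directory -->
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>/usr/hadoop/dfs/data</value>
  </property>
  <!-- Assumed: two DataNodes, so at most two replicas -->
  <property>
    <name>dfs.replication</name>
    <value>2</value>
  </property>
</configuration>
EOF

echo "wrote $sketch_dir/hdfs-site.xml"
```

Whatever directories are configured here must exist and be writable by the hadoop user before the daemons start.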

4) vim mapred-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

5) vim yarn-site.xml

<?xml version="1.0"?>

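Step 5: Single-node verification

1) If the daemons have not been started (start-all.sh has not been run)

Run: [hadoop@master sbin]$ hadoop dfsadmin -report

The expected result is an error saying that port 9000 cannot be reached: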

16/06/12 15:28:41 DEBUG ipc.Client: Connecting to master.hadoop/192.168.136.140:9000

16/06/12 15:28:41 DEBUG ipc.Client: closing ipc connection to master.hadoop/192.168.136.140 Connection refused

Connection refused

java.net.ConnectException: Connection refused

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:744)

at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:530)

2) If the daemons are already running (after start-all.sh)

Run: [hadoop@master sbin]$ hadoop dfsadmin -report

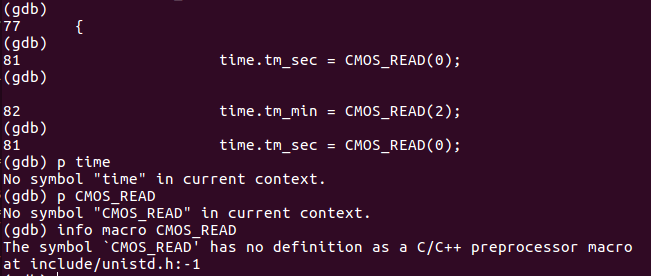

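Additional note: on my system, when I ran the command in case 1) above, I did not realize at first that the daemons were not running. While troubleshooting, I found another problem:

Note 1) Enable debug logging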

[hadoop@master hadoop-2.6.4]$ export HADOOP_ROOT_LOGGER=DEBUG,console

[hadoop@master hadoop-2.6.4]$

[hadoop@master hadoop-2.6.4]$ hadoop dfsadmin -report

...

16/06/12 12:59:11 DEBUG util.NativeCodeLoader: Failed to load native-hadoop with error: java.lang.UnsatisfiedLinkError: /opt/hadoop/hadoop-2.6.4/lib/native/libhadoop.so.1.0.0: /lib64/libc.so.6: version `GLIBC_2.14' not found (required by /opt/hadoop/hadoop-2.6.4/lib/native/libhadoop.so.1.0.0)

...

Note the `GLIBC_2.14' not found message here: the native Hadoop library requires glibc 2.14.

Note 2) Check the system's glibc version:

[hadoop@master hadoop-2.6.4]$ ll /lib64/libc.so.6

lrwxrwxrwx. 1 root root 12 Apr 28 14:43 /lib64/libc.so.6 -> libc-2.12.so
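The same check can be scripted so that it is easy to repeat on every node. The following is a minimal sketch under a few assumptions: it reads the version with getconf GNU_LIBC_VERSION instead of inspecting the symlink, the helper name version_ge is hypothetical, and 2.14 is the minimum taken from the error message above.

```shell
#!/bin/sh
# version_ge is a hypothetical helper: succeeds when dotted version $1 >= $2.
version_ge() {
    awk -v a="$1" -v b="$2" 'BEGIN {
        n = split(a, x, "."); m = split(b, y, ".")
        k = (n > m) ? n : m
        for (i = 1; i <= k; i++) {
            xi = (i <= n) ? x[i] + 0 : 0   # missing components count as 0
            yi = (i <= m) ? y[i] + 0 : 0
            if (xi > yi) exit 0
            if (xi < yi) exit 1
        }
        exit 0                              # versions are equal
    }'
}

required="2.14"   # minimum version named in the UnsatisfiedLinkError
# On glibc systems getconf prints a line such as "glibc 2.12"; keep the second field.
current="$(getconf GNU_LIBC_VERSION 2>/dev/null | awk '{ print $2 }')"

if [ -z "$current" ]; then
    echo "could not determine the glibc version"
elif version_ge "$current" "$required"; then
    echo "glibc $current is new enough (>= $required)"
else
    echo "glibc $current is older than $required"
fi
```

Comparing component by component as numbers matters here: a plain string comparison would rank 2.9 above 2.14.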

The system only has glibc 2.12, so I went looking for a fix.

What a trap!

Note 3) The fix

From http://ftp.gnu.org/gnu/glibc/ download:

glibc-2.14.tar.bz2

glibc-linuxthreads-2.5.tar.bz2

[root@master soft]# tar -jxvf /home/hadoop/soft/glibc-2.14.tar.bz2

[root@master soft]# cd glibc-2.14/

[root@master glibc-2.14]# tar -jxvf /home/hadoop/soft/glibc-linuxthreads-2.5.tar.bz2

[root@master glibc-2.14]# cd .. # must return to the parent directory

[root@master soft]# export CFLAGS="-g -O2" # enable optimization, otherwise the build fails

[root@master soft]# ./glibc-2.14/configure --prefix=/usr --disable-profile --enable-add-ons --with-headers=/usr/include --with-binutils=/usr/bin

[root@master soft]# make # compile; this takes a long time and may fail, in which case run it again

[root@master soft]# make install # install; must be run as root

Verify that the version was upgraded:

[root@master soft]# ll /lib64/libc.so.6

lrwxrwxrwx 1 root root 12 Jun 25 02:07 /lib64/libc.so.6 -> libc-2.14.so # now shows 2.14

Note that both slaves need the same fix.

Step 6: Cluster configuration

1) On master

As the hadoop user, change to the directory /opt/hadoop/hadoop-2.6.4/etc/hadoop

[hadoop@master hadoop]$ vim slaves

slave1.hadoop

slave2.hadoop

2) Log in to master and copy the entire Hadoop directory to the other two machines:

scp -r /opt/hadoop/hadoop-2.6.4 192.168.136.151:/opt/hadoop/hadoop-2.6.4

scp -r /opt/hadoop/hadoop-2.6.4 192.168.136.152:/opt/hadoop/hadoop-2.6.4
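With more than two slaves, typing one scp per host gets tedious. The following is a minimal sketch of deriving the commands from the slaves file instead. It is a dry run that only prints the commands; the helper name print_copy_commands is hypothetical, and it assumes the hostnames in the slaves file resolve through /etc/hosts, whereas the commands above use IP addresses.

```shell
#!/bin/sh
# print_copy_commands is a hypothetical helper: it only prints commands and copies nothing.
# $1: slaves file (one hostname per line); $2: Hadoop directory to copy.
print_copy_commands() {
    while IFS= read -r host || [ -n "$host" ]; do
        case "$host" in
            ''|'#'*) continue ;;   # skip blank lines and comments
        esac
        printf 'scp -r %s %s:%s\n' "$2" "$host" "$2"
    done < "$1"
}

# Example using a scratch copy of the slaves file from step 1) above.
slaves_file="$(mktemp)"
printf 'slave1.hadoop\nslave2.hadoop\n' > "$slaves_file"
print_copy_commands "$slaves_file" /opt/hadoop/hadoop-2.6.4
rm -f "$slaves_file"
```

Once the printed commands look right, they can be run by hand or piped to sh.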

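3) Configure the following two files on the other two machines:

/etc/hosts

/etc/profile

Their contents are the same as on master.

4) Turn off the firewall on all three machines:

As root: service iptables stop

At this point, all three machines in the Hadoop cluster are configured.

Step 7: Cluster verification

Verify on master: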

[hadoop@master hadoop]$ jps

119213 ResourceManager

119544 Jps

118968 NameNode

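Note: at first the NameNode process was missing on this machine, which is clearly a problem. It was fixed later (see the first supplement below).

Verify on slave1: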

[hadoop@slave1 hadoop-2.6.4]$ jps

59295 Jps

59045 DataNode

59150 NodeManager

Verify on slave2:

[hadoop@slave2 hadoop]$ jps

59201 NodeManager

59096 DataNode

59345 Jps
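Reading jps output by eye works for three machines, but the comparison is easy to script. The following is a minimal sketch, not something from the original setup: the helper name missing_daemons is hypothetical, and the expected daemon lists (DataNode and NodeManager on a slave; NameNode, SecondaryNameNode and ResourceManager on master) are taken from the jps listings in this post.

```shell
#!/bin/sh
# missing_daemons is a hypothetical helper.
# $1: text in jps format ("<pid> <name>" per line); remaining arguments: expected daemon names.
# Prints each expected name that does not appear in the listing.
missing_daemons() {
    jps_output="$1"
    shift
    for name in "$@"; do
        printf '%s\n' "$jps_output" \
            | awk -v n="$name" '$2 == n { found = 1 } END { exit !found }' \
            || printf '%s\n' "$name"
    done
}

# Sample listings copied from this post; on a live node use "$(jps)" instead.
master_sample='119213 ResourceManager
119544 Jps
118968 NameNode'
slave_sample='59295 Jps
59045 DataNode
59150 NodeManager'

echo "missing on master: $(missing_daemons "$master_sample" NameNode SecondaryNameNode ResourceManager)"
echo "missing on slave1: $(missing_daemons "$slave_sample" DataNode NodeManager)"
```

For the master listing above this reports SecondaryNameNode as missing, which is the situation the second supplement deals with.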

Verify through the web UI:

http://192.168.136.140:8088/cluster/nodes

http://192.168.136.140:50070/dfshealth.html#tab-overview

If both URLs open without problems, the cluster is OK.

+++++++++++++++++++++++++++++++++++++

Supplement 1: Fixing a NameNode that fails to start

First, check the log:

2016-06-12 13:25:46,764 FATAL org.apache.hadoop.hdfs.server.namenode.NameNode: Failed to start namenode.

org.apache.hadoop.hdfs.server.common.InconsistentFSStateException: Directory /usr/hadoop/dfs/name is in an inconsistent state: storage directory does not exist or is not accessible.

at org.apache.hadoop.hdfs.server.namenode.FSImage.recoverStorageDirs(FSImage.java:314)

at org.apache.hadoop.hdfs.server.namenode.FSImage.recoverTransitionRead(FSImage.java:202)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.loadFSImage(FSNamesystem.java:1022)

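/usr/hadoop/dfs/name apparently does not exist. A manual check confirmed it: Hadoop does not create the directory on its own, which is a bit dumb. I created it by hand for the hadoop user.

Starting again still failed, but the error had changed: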

2016-06-12 15:20:04,608 INFO org.apache.hadoop.hdfs.server.common.Storage: Lock on /usr/hadoop/dfs/name/in_use.lock acquired by nodename 116900@master.hadoop

2016-06-12 15:20:04,611 WARN org.apache.hadoop.hdfs.server.namenode.FSNamesystem: Encountered exception loading fsimage

java.io.IOException: NameNode is not formatted.

at org.apache.hadoop.hdfs.server.namenode.FSImage.recoverTransitionRead(FSImage.java:212)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.loadFSImage(FSNamesystem.java:1022)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.loadFromDisk(FSNamesystem.java:741)

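NameNode is not formatted. So I formatted it with the command below (its output notes that this form is deprecated in favor of the hdfs command):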

[hadoop@master hadoop-2.6.4]$ bin/hadoop namenode -format

DEPRECATED: Use of this script to execute hdfs command is deprecated.

Instead use the hdfs command for it.

16/06/12 15:25:49 INFO namenode.NameNode: STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting NameNode

STARTUP_MSG: host = master.hadoop/192.168.136.140

STARTUP_MSG: args = [-format]

STARTUP_MSG: version = 2.6.4

...

Finally, start everything again with start-all.sh. Note that it is best to run stop-all.sh before formatting.

+++++++++++++++++++++++++++++++++++++

Supplement 2: jps on master shows only 2 daemons

[hadoop@master hadoop]$ jps

119213 ResourceManager

119544 Jps

118968 NameNode

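The SecondaryNameNode process was missing. After a long investigation, I found that start-all.sh had no entry for starting the secondarynamenode at all. After adding one (shown after the jps output below), everything started normally. Running jps again: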

[hadoop@master ~]$ jps

3303 ResourceManager

3871 Jps

3677 SecondaryNameNode

3058 NameNode

hadoop-daemons.sh --config $HADOOP_CONF_DIR --hosts secondarynamenode start secondarynamenode
